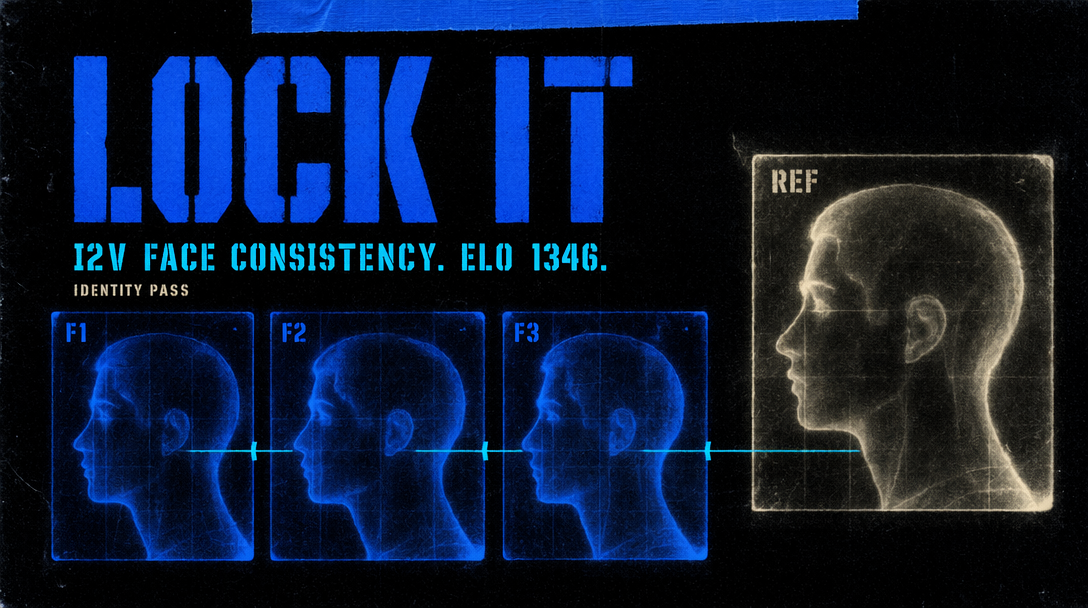

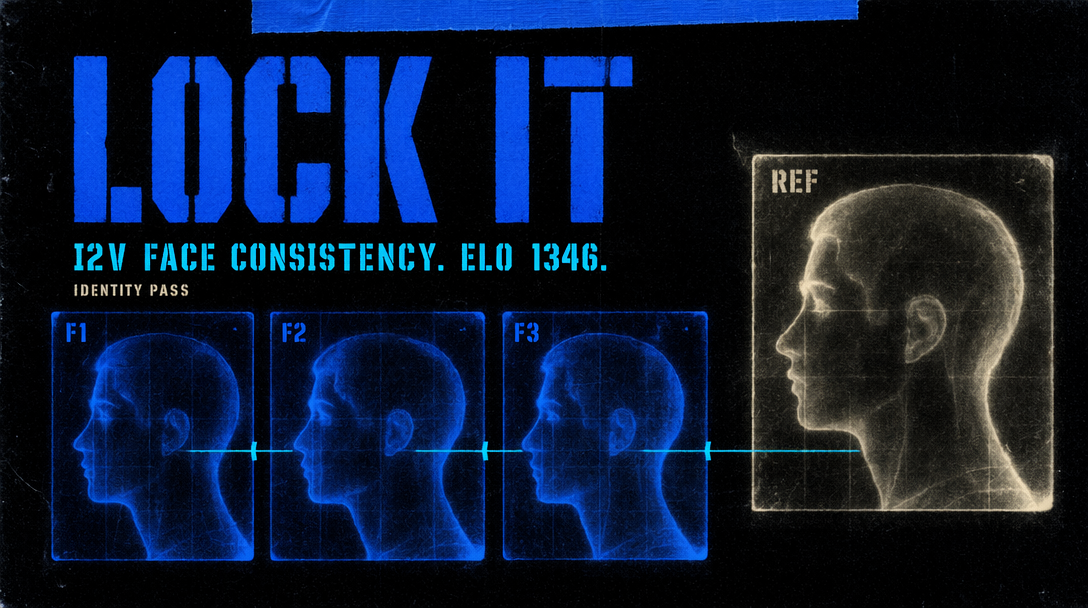

Image-to-Video: Character Consistency Patterns That Hold

Seedance 2.0 image-to-video holds wardrobe, face, and lighting for a full 15 seconds when you feed it the right still. The still does more work than the prompt.

Seedance 2.0 sits at 1346 Elo on the Arena image-to-video board, 76 points above its own text-to-video score. The shared attention layers treat the reference image as a spatial prior the prompt conditions on rather than competes with. Feed a clean 720p still, write a short motion prompt, and you get a 15 second clip where the character still looks like the character at second 14.

That stability is the point for brand work. Generate the hero. Freeze frame 180. Feed it to clip two. Outfit holds. Face holds. Key light holds. Three stitched clips read as one continuous scene.

What the still actually does

The reference frame on bytedance/seedance-2.0/image-to-video is the first frame of the output, and the model plans the next 360 frames outward from it.

- Wardrobe pattern and silhouette. The jacket you photographed is the jacket that moves.

- Face geometry. Cheek shape, jaw angle, eye spacing carry across the full duration.

- Hair placement and texture. Where strands fall in frame one is where they start moving from.

- Lighting direction. Key light stays where you put it.

- Focus plane. The region that is sharp stays sharp unless the prompt names a focus pull.

What the still cannot fix: poor composition, a crop that cuts the face at the forehead, low resolution that forces the model to hallucinate texture in the first second.

Why lighting on the still beats detail on the still

This is the part most teams get backward. You can hand Seedance 2.0 a slightly soft reference and get back a crisp clip. You cannot hand it one with blown highlights or crushed shadows and get back a clip that reads as consistent. The model uses light direction as scaffolding. A still with chaotic light, bounced fluorescents plus window fill plus a phone flash, gives conflicting information about where the key lives. The clip wobbles as each frame renegotiates.

Stable lighting: a single key at 45 degrees with soft fill and one practical in frame. A softbox camera left. Window light with a bounce card.

Unstable lighting: three overhead office tubes. Mixed daylight plus tungsten. Any setup where shadows on the face point in two directions. Fix the light before you worry about megapixels.

The prompt that goes with the still

I2V prompts run shorter than T2V prompts. The still carries subject, setting, and lighting. Your job is to describe what changes across the 15 seconds. Five to twelve words is the sweet spot.

Examples that land:

- camera pushes in slowly, subject tilts head toward window

- wind lifts hair, subject looks up past the lens

- rain begins falling, subject steps forward one pace

- camera orbits right 20 degrees, subject follows the motion

Above roughly fifteen words, text conditioning competes with image conditioning for attention budget, and identity drifts in the back half of the clip.

A working call

01import { fal } from "@fal-ai/client";0203const result = await fal.subscribe("bytedance/seedance-2.0/image-to-video", {04 input: {05 image_url: "https://your-cdn.example/hero-still-720p.jpg",06 prompt: "camera pushes in slowly, subject tilts head toward window",07 duration: 15,08 resolution: "720p",09 seed: 4210 },11 logs: true12});

Hold the seed while you iterate the motion prompt. Composition stays locked, only the movement varies.

Stitching multiple shots

- Generate clip one from your reference still. Pick the seed that landed.

- Extract the final frame at native resolution (ffmpeg

-sswith the duration and-frames:v 1). - Feed that last frame as the start frame of clip two with a fresh short prompt.

- Repeat for clip three using the last frame of clip two.

Do not downsample between clips. If the first clip ended on a closed-eye frame, go back a few frames and extract from a cleaner moment.

When a clip looks broken, check the still

- Identity drifts by second 10. Lighting too mixed. Reshoot.

- Wardrobe warps after 5 seconds. Pattern too fine for 720p.

- Face softens. Still was not sharp at face. Re-crop onto the eyes.

- Background morphs. Busy bokeh. Shoot against a plain backdrop.

- Hair turns to paste. Lift shadows on the reference.

One dominant light and a 5 to 12 word motion prompt is the recipe.